NVIDIA DGX™ Systems

Designed for the

Age of AI Reasoning

End-to-End Deployment Services and Support

with AMAX, an NVIDIA DGX Elite Partner.

AMAX is an NVIDIA DGX AI Compute Systems Elite Partner

The highest level of DGX Competency

Discounts for Education & Inception Members

Find out if you qualify for special offers on DGX Systems

NVIDIA DGX™ H200

High-bandwidth memory and compute for scalable AI training, fine-tuning, and inference.

NVIDIA DGX™ B200

Blackwell-powered system for next-gen AI in a standard enterprise form factor.

NVIDIA DGX™ B300

Blackwell Ultra system built for MGX and enterprise racks, optimized for AI pipelines.

NVIDIA DGX™ GB200

Rack-scale system with Grace Blackwell for unified memory and accelerated AI performance.

NVIDIA DGX™ GB300

Liquid-cooled, rack-scale platform with 72 GPUs for large-scale AI reasoning and deployment.

Going On-Prem▼

Integrated Hardware + Software▼

Turnkey Solutions▼

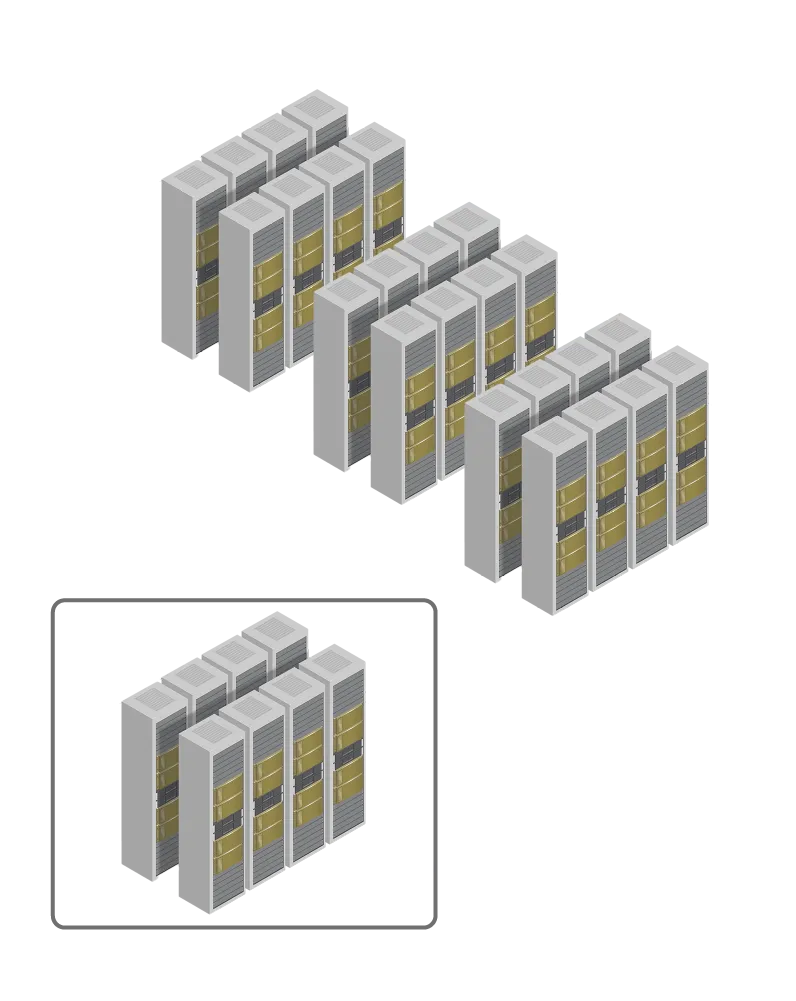

Designed to Scale▼

System Architecture Design

Networking Configuration

Global Deployment Services

Remote Testing & Validation

Performance Tuning & Support

"We feel well-supported on every aspect of our product development and expect further collaboration with AMAX."

"The AMAX GPU solution is working beautifully and we were very impressed with the quality."

"With the AMAX GPU cluster, the performance factor increase has been roughly 120-150x!"

Ultra-dense, Liquid-cooled open rack architecture for hyperscale AI

Questions

AMAX specializes in IT solutions for AI, industrial computing, and liquid cooling technologies, enhancing system performance and efficiency.

Our solutions enhance system efficiency, reliability, and scalability, empowering advanced data processing and reducing operational costs.

Our team conducts a detailed assessment of your infrastructure to integrate customized solutions seamlessly, enhancing performance and efficiency.

Yes, we offer comprehensive support across the lifecycle of our solutions, ensuring optimal performance and assisting with upgrades and technical issues.

Getting started is straightforward. Contact our sales team for a tailored consultation to align our solutions with your business needs.